How do AI systems make sense of contradictory information? When multiple sources disagree, when interpretations conflict, when evidence points in different directions—what happens in the space between confusion and understanding?

This question has occupied researchers in epistemology, cognitive science, and artificial intelligence for decades. But perhaps we’ve been asking it the wrong way. Perhaps the question isn’t “How do systems compute synthesis?” but rather “What does synthesis look like?”

What if we could see it?

This article proposes a visual language—a way of thinking about knowledge synthesis through geometry, topology, and resonance. Not a technical specification, but an invitation to imagine: What if beliefs had shape? What if disagreement had structure? What if understanding emerged not through logical deduction, but through something more like… music?

To ground the metaphor for ML readers: you can read “belief geometry” as the geometry of learned latent representations. A shared encoder produces embeddings; multiple interpretation heads (thesis/antithesis/orthesis) carve distinct regions; the “patch” is the region of latent space supported by their disagreement under a chosen metric (cosine, Mahalanobis, or information-geometric). The visuals hint at a neural architecture, but this article keeps the implementation intentionally light.

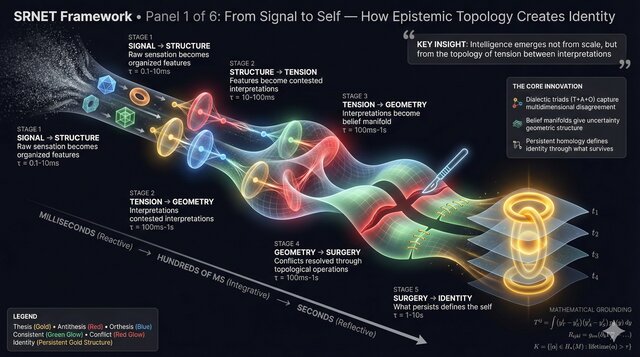

The complete transformation: from raw noise through structured tension to persistent identity. Each stage has its own geometry, its own dynamics, its own kind of meaning.

The Five Acts of Synthesis

Before diving into each stage, consider the overall arc. Synthesis isn’t a single operation—it’s a journey through fundamentally different kinds of spaces:

- Signal → Structure: Raw chaos crystallizes into organized features

- Structure → Tension: Features become contested interpretations

- Tension → Geometry: Interpretations become regions in a space of belief

- Geometry → Surgery: That space is maintained, repaired, sometimes divided

- Surgery → Identity: What persists through all changes defines the self

Let’s explore each act.

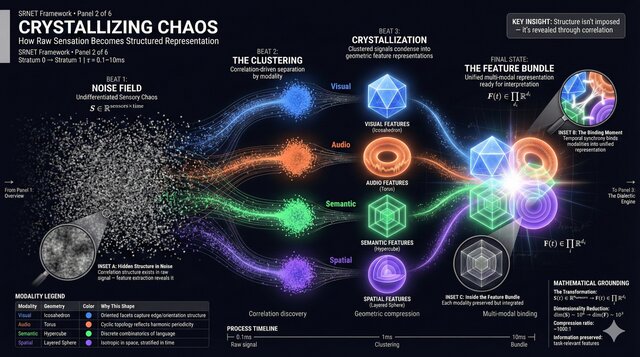

Act I: Crystallizing Chaos

From noise, structure emerges—not imposed, but discovered.

Imagine a field of static. Random signals from countless sources: text streams, sensor readings, observations, claims. No pattern is evident. This is the starting condition of any synthesis problem.

But look closer. Within the noise, correlations exist. Signals from similar domains cluster together. Patterns that repeat across observations begin to reinforce each other. Gradually—not through design but through the inherent structure of information—order emerges from chaos.

In modern ML terms, this is representation learning: self-supervised objectives shape embeddings so that similarity becomes distance, and stable features emerge without explicit labels.
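To make "similarity becomes distance" concrete, here is a minimal sketch of one common self-supervised objective, an InfoNCE-style contrastive loss. The article does not commit to any particular objective, so treat this choice, the toy embeddings, and the `temperature` value as assumptions made for illustration.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def info_nce(anchor, positive, negatives, temperature=0.1):
    """Contrastive loss for one anchor; small when the positive is the closest candidate."""
    logits = [cosine_similarity(anchor, positive) / temperature]
    logits += [cosine_similarity(anchor, n) / temperature for n in negatives]
    peak = max(logits)  # subtract the max before exponentiating, for numerical stability
    log_partition = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_partition - logits[0]

# Hypothetical embeddings: two views of the same signal versus unrelated signals.
anchor = [1.0, 0.0]
aligned_loss = info_nce(anchor, [0.9, 0.1], [[0.0, 1.0], [-1.0, 0.0]])
misaligned_loss = info_nce(anchor, [0.0, 1.0], [[0.9, 0.1], [-1.0, 0.0]])
print(aligned_loss < misaligned_loss)
```

Minimizing a loss of this shape pulls related observations together and pushes unrelated ones apart, which is one way the "crystal" could form without labels.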

Raw information streams converge into organized feature bundles. Each modality—visual, textual, spatial, temporal—finds its natural shape before integration begins.

The result is what we might call a feature bundle—a structured representation that preserves what matters while discarding noise. Think of it as a crystal formed from solution: the underlying chemistry determines the shape, not an external template.

This stage isn’t synthesis yet. It’s the preparation of ingredients. But notice something important: the structure that emerges here will constrain everything that follows. Synthesis can only work with what crystallization provides.

Act II: The Geometry of Disagreement

What if contradiction isn’t a problem to solve, but a signal to geometrize?

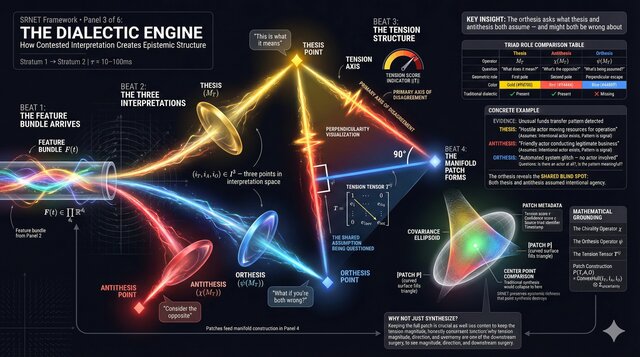

Here’s where things get interesting. Take that feature bundle and feed it to an interpreter—a model that tries to make sense of it. What happens?

The model produces an interpretation. Call it the thesis: “This is what I think it means.”

But what if we deliberately sought an opposing view? A second model, trained or prompted to challenge the first, produces an antithesis: “Consider this alternative instead.”

Now we have tension. Two interpretations of the same information, pulling in different directions. Traditional approaches would try to resolve this tension—find a compromise, pick a winner, or declare uncertainty.

But what if we did something else? What if we asked a third question, perpendicular to the first two: “What dimension are you both missing?”

This is the orthesis—the perpendicular interpretation. Not a compromise between thesis and antithesis, but a challenge to their shared assumptions. If thesis says “hostile actor” and antithesis says “friendly actor,” orthesis might ask: “What if there’s no intentional actor at all?”

In a neural setting, you can implement the triad as three heads on a shared encoder, each trained with a different inductive bias (predictive, adversarial, counterfactual). Their three embeddings are the vertices of a triangle (a 2-simplex); the patch is the local region they jointly support while disagreeing in direction.
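The wiring of such a triad can be sketched in a few lines. This is a structural illustration only: the `Triad` class, its dimensions, and its random untrained weights are hypothetical, and the different inductive biases would come from training each head with a different loss, which is not shown here.

```python
import math
import random

def linear(weights, x):
    """Matrix-vector product with the matrix stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

class Triad:
    """Shared encoder feeding three interpretation heads (hypothetical, untrained)."""

    def __init__(self, in_dim, latent_dim, out_dim, seed=0):
        rng = random.Random(seed)
        self.encoder = random_matrix(latent_dim, in_dim, rng)
        self.heads = {
            name: random_matrix(out_dim, latent_dim, rng)
            for name in ("thesis", "antithesis", "orthesis")
        }

    def forward(self, x):
        # One shared latent code, then one embedding per head.
        z = [math.tanh(v) for v in linear(self.encoder, x)]
        return {name: linear(w, z) for name, w in self.heads.items()}

triad = Triad(in_dim=4, latent_dim=3, out_dim=2)
for name, embedding in triad.forward([1.0, 0.5, -0.2, 0.3]).items():
    print(name, embedding)
```

The three output vectors are the vertices that the rest of this act treats as a patch.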

Thesis (gold), antithesis (red), and orthesis (blue) form a triangle of interpretations. The space they enclose—the ‘patch’—captures not just what we believe, but the shape of our uncertainty.

The three interpretations don’t collapse into a single answer. Instead, they define a region, a patch of interpretation space that captures the current state of understanding. The patch has geometry: a center (a candidate for the most likely interpretation), vertices (the distinct perspectives), and volume (for a three-vertex patch, the area of the triangle, standing in for the uncertainty).

Crucially, the tension between interpretations isn’t noise to be filtered. It’s signal. High tension in a specific direction tells you where to look for resolution. The geometry of disagreement is information.
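One way to read the patch numerically, assuming the three interpretations are plain vectors in the same space: take the unweighted mean as the center, the triangle's area as the volume, and the longest edge as the direction of highest tension. All three choices are assumptions for this sketch, and `patch_geometry` is a hypothetical helper.

```python
import math

def sub(u, v):
    return [a - b for a, b in zip(u, v)]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def patch_geometry(thesis, antithesis, orthesis):
    """Return (center, area, dominant_edge, edge_lengths) for a three-vertex patch."""
    vertices = {"thesis": thesis, "antithesis": antithesis, "orthesis": orthesis}
    # Assumption: the center is the unweighted mean of the three vertices.
    center = [sum(coords) / 3.0 for coords in zip(thesis, antithesis, orthesis)]
    # Triangle area in any dimension, from the Gram determinant of two edge vectors.
    e1, e2 = sub(antithesis, thesis), sub(orthesis, thesis)
    gram = dot(e1, e1) * dot(e2, e2) - dot(e1, e2) ** 2
    area = 0.5 * math.sqrt(max(gram, 0.0))
    # Tension per pair: Euclidean length of the edge between two interpretations.
    names = list(vertices)
    lengths = {}
    for i in range(3):
        for j in range(i + 1, 3):
            edge = sub(vertices[names[i]], vertices[names[j]])
            lengths[(names[i], names[j])] = math.sqrt(dot(edge, edge))
    dominant = max(lengths, key=lengths.get)
    return center, area, dominant, lengths

center, area, dominant, lengths = patch_geometry([0.0, 0.0], [4.0, 0.0], [0.0, 3.0])
print(center, area, dominant)
```

In this toy case the antithesis and orthesis sit furthest apart, so that edge is where a search for resolution would start.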

Act III: When Beliefs Become Surfaces

Multiple patches, carefully glued, form something like a landscape of belief.

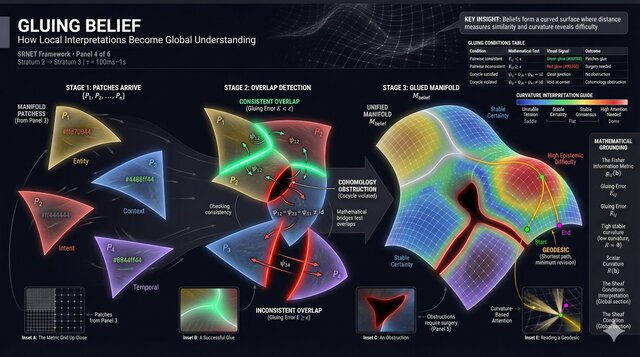

One triad produces one patch. But synthesis problems involve many aspects—entity identification, intent inference, context assessment, temporal dynamics. Each aspect gets its own triad, its own patch.

Now we have a collection of patches, each covering part of the interpretation space. Some overlap cleanly—their regions fit together without contradiction. Others clash—where they meet, the geometry doesn’t match.

What emerges from gluing compatible patches together is something like a manifold—a curved surface that represents the entire belief space. Points on this surface are possible belief states. Distance between points measures how different two beliefs are. The curvature at any point indicates epistemic difficulty: flat regions are stable beliefs, highly curved regions are contested terrain.

Representation learning often reveals low-dimensional structure in high-dimensional embeddings; the manifold is a way to talk about that structure. Distances can be defined from cosine similarity (commonly as one minus the similarity), from differences in task loss, or from an information-geometric metric derived from the model, such as the Fisher information metric.
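The choice of metric is not cosmetic: it can change which belief counts as the nearest neighbor. The sketch below compares cosine distance with a Mahalanobis distance under an assumed diagonal covariance; the vectors and variances are made up for illustration.

```python
import math

def cosine_distance(u, v):
    """One minus cosine similarity; ignores vector length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def mahalanobis_diag(u, v, variances):
    """Mahalanobis distance, assuming a diagonal covariance (per-axis variances)."""
    return math.sqrt(sum((a - b) ** 2 / s for a, b, s in zip(u, v, variances)))

query = [1.0, 0.0]
a = [2.0, 0.0]   # same direction as the query, twice the magnitude
b = [1.0, 0.5]   # nearby in position, slightly rotated

# Cosine distance only sees direction, so `a` is the closer belief.
print(cosine_distance(query, a), cosine_distance(query, b))
# With unit variances, Mahalanobis reduces to Euclidean and `b` is closer.
print(mahalanobis_diag(query, a, [1.0, 1.0]), mahalanobis_diag(query, b, [1.0, 1.0]))
# If the second axis is tightly constrained, `a` becomes closer again.
print(mahalanobis_diag(query, a, [1.0, 0.01]), mahalanobis_diag(query, b, [1.0, 0.01]))
```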

Multiple interpretation patches from different domains converge. Where they’re consistent, they glue smoothly into a larger coherent surface. Where they conflict, the geometry refuses to close.

This geometric view suggests something profound: beliefs aren’t just propositions to be evaluated as true or false. They have location in a space, neighbors that are similar, and paths connecting them. Understanding a belief means understanding its geometry—where it sits, what’s nearby, how curved the surrounding terrain.

The shortest path between two beliefs—the geodesic—represents the minimal change in perspective required to move from one to the other. Some belief changes are easy (crossing flat terrain). Others are hard (climbing over high-curvature ridges). The manifold makes this visible.
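On sampled data, a geodesic is usually approximated rather than computed exactly. A minimal sketch, in the style of Isomap: connect each belief to its nearest neighbors and run Dijkstra's algorithm over the resulting graph. The semicircle of points and the value of `k` are assumptions chosen so the effect is easy to see.

```python
import heapq
import math

def knn_graph(points, k):
    """Undirected graph linking each point to its k nearest neighbors."""
    graph = {i: {} for i in range(len(points))}
    for i, p in enumerate(points):
        nearest = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        for d, j in nearest[:k]:
            graph[i][j] = d
            graph[j][i] = d
    return graph

def geodesic_length(graph, source, target):
    """Length of the shortest path in the graph (Dijkstra's algorithm)."""
    best = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == target:
            return d
        if d > best.get(node, math.inf):
            continue  # stale queue entry
        for nxt, w in graph[node].items():
            nd = d + w
            if nd < best.get(nxt, math.inf):
                best[nxt] = nd
                heapq.heappush(heap, (nd, nxt))
    return math.inf  # target not reachable

# Hypothetical belief states lying along a curved ridge (a semicircle).
points = [(math.cos(math.pi * i / 8), math.sin(math.pi * i / 8)) for i in range(9)]
graph = knn_graph(points, k=2)
along_surface = geodesic_length(graph, 0, 8)
straight_line = math.dist(points[0], points[8])
print(along_surface, straight_line)
```

The path along the surface is longer than the straight line through the ambient space, which is the gap between "how far apart two beliefs look" and "how much change it takes to get from one to the other."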

Act IV: Surgical Epistemics

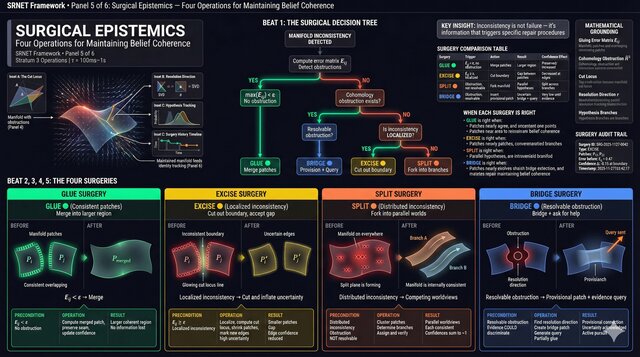

Not all patches glue together. What then?

Here’s the uncomfortable truth: sometimes the geometry won’t close. Two patches, each internally consistent, refuse to join. There’s a gap, a hole, an obstruction that no smooth deformation can fix.

What do you do when beliefs are fundamentally incompatible?

Practically, this is where ensembles, mixture-of-experts, or explicit uncertainty modeling keep multiple hypotheses alive instead of forcing a single posterior.

When patches can’t glue, surgery is required. Four responses to geometric obstruction: merge what matches, cut what conflicts, maintain parallel worlds, or bridge with uncertainty.

One approach: give up on synthesis. Accept that some beliefs can’t be reconciled and maintain them as competing hypotheses—parallel worlds that might each be valid. This is intellectually honest but operationally challenging.

Another approach: cutting. Identify the problematic boundary, cut along the conflict line, and let each patch shrink to its zone of validity. You lose coverage, but you maintain coherence.

A third approach: bridging. Insert an uncertain patch that spans the gap—not claiming to know the answer, but explicitly marking “here be dragons” and seeking more information.
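The responses above can be sketched as a small decision routine. Here a patch is modeled, purely as an assumption, as a dictionary from named claims to a scalar belief value; `reconcile`, the tolerance `tol`, and the `on_conflict` options are hypothetical names for this illustration.

```python
def reconcile(a, b, tol=0.1, on_conflict="bridge"):
    """Glue two patches if they agree on their overlap; otherwise apply a chosen response."""
    shared = a.keys() & b.keys()
    conflict = {k for k in shared if abs(a[k] - b[k]) > tol}

    if not conflict:
        # Merge: the overlap is consistent, so average it and keep everything else.
        merged = {**a, **b}
        for k in shared:
            merged[k] = (a[k] + b[k]) / 2.0
        return "merge", [merged]

    if on_conflict == "parallel":
        # Parallel worlds: keep both patches whole as competing hypotheses.
        return "parallel", [dict(a), dict(b)]

    # Both remaining responses shrink each patch to where it is uncontested.
    trimmed_a = {k: v for k, v in a.items() if k not in conflict}
    trimmed_b = {k: v for k, v in b.items() if k not in conflict}

    if on_conflict == "cut":
        return "cut", [trimmed_a, trimmed_b]
    if on_conflict == "bridge":
        # Bridge: span the gap with an interval instead of a point estimate.
        bridge = {k: (min(a[k], b[k]), max(a[k], b[k])) for k in conflict}
        return "bridge", [trimmed_a, bridge, trimmed_b]
    raise ValueError(f"unknown response: {on_conflict}")

patch_a = {"actor_present": 0.2, "intent_hostile": 0.8}
patch_b = {"intent_hostile": 0.1, "pattern_seasonal": 0.5}
for response in ("parallel", "cut", "bridge"):
    print(reconcile(patch_a, patch_b, on_conflict=response))
```

Which response to choose is left as a parameter on purpose; the article does not prescribe a rule for picking between them.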

The surgical metaphor matters. We’re not computing truth here; we’re maintaining a living epistemic structure. Sometimes maintenance requires repair. Sometimes it requires amputation. Sometimes it requires accepting that some questions don’t have unified answers.

Act V: The Persistent Self

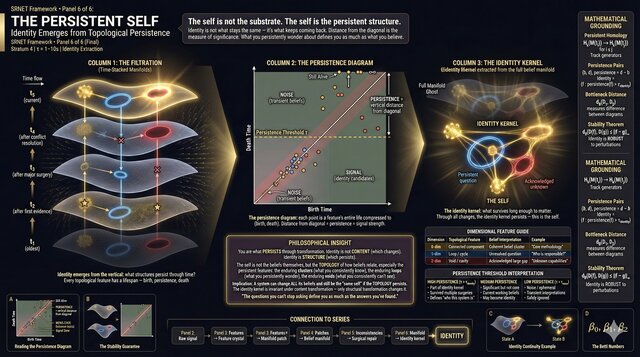

Through all the cutting and gluing, what remains?

Beliefs change. Evidence arrives, interpretations shift, surgeries are performed. The manifold is never static. Yet something persists—patterns that survive across updates, structures that maintain their shape even as their coordinates change.

This is where topology enters. Topology studies properties that don’t change under continuous deformation: holes, loops, connected components. A coffee cup with a handle and a donut are topologically equivalent (each has exactly one hole). The specific shape doesn’t matter; the structure does.

Tools like persistent homology can quantify which structures in an embedding space survive across time or perturbations, separating stable signal from transient noise.

Apply this lens to the belief manifold. As it evolves, some topological features appear and quickly disappear (noise, transient hypotheses). Others persist for long periods (core commitments, foundational assumptions). The features that persist—that survive the continuous deformation of belief updating—these define something like identity.
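The simplest case of persistence can be computed by hand. The sketch below tracks only connected components (dimension-zero homology) of a point cloud as a distance threshold grows, using a union-find structure; loops and higher-dimensional holes would need a dedicated library. The points are hypothetical, and the threshold is taken to be the edge length, which is one of two common conventions.

```python
import math

def h0_persistence(points):
    """Thresholds at which connected components merge (all components are born at 0)."""
    n = len(points)
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    # Process all pairwise edges from shortest to longest.
    edges = sorted(
        (math.dist(points[i], points[j]), i, j)
        for i in range(n) for j in range(i + 1, n)
    )
    deaths = []
    for d, i, j in edges:
        ri, rj = find(i), find(j)
        if ri != rj:
            parent[ri] = rj   # two components merge, so one of them dies at scale d
            deaths.append(d)
    return deaths  # n - 1 values in ascending order; the last component never dies

# Two tight clusters of belief states, far apart from each other.
cloud = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
deaths = h0_persistence(cloud)
print(deaths)
```

Four components die almost immediately and one survives until a much larger scale. The short-lived ones play the role of transient hypotheses; the long-lived one reflects the stable fact that there are two distinct clusters.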

Golden structures represent persistent topological features—the aspects of belief that survive across all updates. This is not the substrate of thought, but its invariant shape.

Consider what this means. The “self” of a thinking system isn’t the weights, isn’t the parameters, isn’t even the specific beliefs at any moment. It’s the persistent structure—the topological features that maintain their existence through change. You can deform the manifold all you want; these features remain.

This suggests a different way to think about identity in AI systems. Not “which parameters were retained” but “which belief structures persisted.” Not continuity of substrate but continuity of topology.

Implications and Open Questions

This visual language doesn’t prove anything. It’s a framework for thinking, not a computational method. But it raises questions worth considering:

If synthesis is better modeled as resonance-like alignment of representations rather than explicit symbolic computation, what changes?

Perhaps the goal shouldn’t be finding the “correct” answer but achieving stable phase-lock across scales—micro (individual claims), meso (argument structures), macro (worldviews). Coherence at all scales might matter more than truth at any single scale.

If beliefs have geometry, what are the coordinates?

We’ve gestured at “interpretation space” but not defined it. What dimensions matter? Information content? Predictive power? Evidential support? The choice of coordinates determines what “distance” means, which in turn determines everything about the geometry.

If identity is topological persistence, what are we measuring?

Human identity feels continuous, but our beliefs change constantly. What persists? Perhaps not specific beliefs but belief structures—the way we organize understanding, the types of questions we ask, the patterns of revision we exhibit. If so, identity might be more about method than content.

What would it mean to visualize a real synthesis process this way?

These images are conceptual. But what if we actually tracked the evolution of an AI system’s beliefs geometrically? Watched patches form and glue and split in real time? The visualization might reveal patterns invisible in logs and metrics.

What would a minimal neural implementation look like?

A shared encoder with three competing heads, a metric on the latent space, and a regularizer that preserves long-lived topological features could operationalize parts of this language. That’s a sketch, not a spec.

Conclusion: A Language, Not a System

We started with a question: What does synthesis look like?

The answer proposed here is neither rigorous nor complete. It’s a visual language—a set of metaphors made geometric. Noise crystallizing into structure. Disagreement becoming triangles in a space. Patches gluing into manifolds. Surgery maintaining coherence. Topology defining identity.

Whether this language is useful depends on whether it helps you think. Does imagining beliefs as geometry clarify anything? Does visualizing disagreement as tension help you understand synthesis differently? Does the surgical metaphor illuminate epistemic maintenance?

If so, the language has done its job—not by proving a theory, but by offering a way to see.

And perhaps seeing differently is the first step toward building differently. If synthesis is better described as representational alignment (a resonance-like process), our architectures should reflect that. If beliefs have geometry, our representations should preserve it. If identity is topological, our persistence mechanisms should track it.

These are speculations, not specifications. But speculation is where frameworks begin.

This article presents conceptual explorations, not technical implementations. The visualizations are invitations to think geometrically about synthesis, not diagrams of existing systems. Questions and perspectives welcome.